Perception is commonly treated as a transparent window onto the external world. Individuals assume that what they see, hear, and feel corresponds—at least approximately—to objective reality. Yet contemporary psychology and cognitive neuroscience increasingly challenge this assumption, suggesting that perception is not a passive reception of sensory input but an active, constructive process. The brain does not simply record the world; it generates models of it. This raises a profound question: can perception fabricate reality, and if so, to what extent is the experienced world a product of neural inference rather than direct observation?

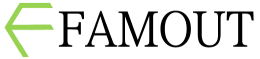

To address this question, it is essential to reconsider the nature of perception itself. Traditional views conceptualized perception as a bottom-up process in which sensory organs detect external stimuli and transmit this information to the brain for interpretation. While this framework captures part of the process, it fails to account for the brain’s predictive and interpretive functions. Contemporary models emphasize that perception arises from an interaction between bottom-up sensory signals and top-down predictions generated by the brain.

According to predictive processing theories, the brain continuously generates hypotheses about the causes of sensory input. These hypotheses are based on prior knowledge, expectations, and contextual information. Incoming sensory signals are then compared to these predictions, and discrepancies—known as prediction errors—are used to update the brain’s internal model. Perception, in this framework, is the brain’s best guess about what is happening in the world, given both prior expectations and current input.
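The update cycle described above can be made concrete with a toy calculation. The sketch below is only an illustration, not a model of any actual neural mechanism: it assumes that both the prior belief and the sensory signal can be summarized as Gaussian estimates of a single quantity, and the function name `update_belief` and all numeric values are hypothetical choices made for this example.

```python
def update_belief(prior_mean, prior_var, observation, sensory_var):
    """One Gaussian belief update driven by a prediction error (toy model)."""
    # Prediction error: the gap between what arrived and what was expected.
    prediction_error = observation - prior_mean
    # Gain: the share of the error that is allowed to revise the belief.
    # An uncertain prior (large prior_var) or a reliable signal (small sensory_var)
    # pushes the gain toward 1.
    gain = prior_var / (prior_var + sensory_var)
    posterior_mean = prior_mean + gain * prediction_error
    posterior_var = (1.0 - gain) * prior_var
    return posterior_mean, posterior_var


# Hypothetical values: the model expects 0.0, but the input keeps signalling 2.0.
belief_mean, belief_var = 0.0, 4.0
for observation in [2.0, 2.0, 2.0]:
    belief_mean, belief_var = update_belief(
        belief_mean, belief_var, observation, sensory_var=1.0
    )

print(belief_mean, belief_var)
```

With each repeated observation the belief moves closer to the input and its uncertainty shrinks, which is one simple way to picture a model being revised by prediction errors.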

This inferential nature of perception implies that what individuals experience is not the world itself but a constructed representation. Under normal conditions, this representation aligns closely with external reality because the brain’s predictions are calibrated through ongoing interaction with the environment. However, when the balance between prediction and sensory input becomes disrupted, perception may deviate significantly from external conditions.

One of the clearest demonstrations of perceptual fabrication occurs in the context of illusions. Visual illusions reveal that the brain can generate coherent perceptions that do not correspond to physical reality. These illusions are not errors in the sense of malfunction; rather, they reflect the brain’s reliance on assumptions that are generally adaptive but occasionally misleading. For example, the brain assumes continuity, depth, and lighting conditions when interpreting visual scenes. When these assumptions are manipulated, perception follows the model rather than the actual stimulus.

Beyond simple illusions, more complex forms of perceptual fabrication emerge in hallucinations. Hallucinations involve the experience of sensory events in the absence of corresponding external stimuli. These experiences can occur in various modalities, including vision, hearing, and touch. Unlike illusions, which distort real input, hallucinations represent the generation of perceptual content without external triggers.

From a predictive processing perspective, hallucinations may arise when top-down predictions become excessively strong relative to bottom-up sensory input. In such cases, the brain’s expectations dominate perception and produce perceptual content even though no corresponding external signal is present. The resulting experience feels real because it is thought to engage much of the same neural machinery as ordinary perception.
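A small numerical sketch can illustrate what "excessively strong" predictions might mean. It assumes, purely for illustration, that a percept is a precision-weighted average of an expectation and a sensory signal. The function `percept`, the precision values, and the 0-to-1 scale are hypothetical and are not taken from any clinical or neural model.

```python
def percept(prior_mean, prior_precision, sensory_input, sensory_precision):
    """Precision-weighted average of an expectation and a sensory signal (toy model)."""
    total_precision = prior_precision + sensory_precision
    return (
        prior_precision * prior_mean + sensory_precision * sensory_input
    ) / total_precision


# Hypothetical scale: 1.0 means "a voice is present", 0.0 means silence.
expected_voice = 1.0
actual_input = 0.0  # no external stimulus

# Balanced case: the sensory signal is trusted far more than the expectation.
balanced = percept(expected_voice, prior_precision=1.0,
                   sensory_input=actual_input, sensory_precision=9.0)

# Overweighted prior: the expectation is trusted far more than the signal.
dominant = percept(expected_voice, prior_precision=99.0,
                   sensory_input=actual_input, sensory_precision=1.0)

print(balanced)  # about 0.1: the percept stays close to the silent input
print(dominant)  # about 0.99: the percept follows the expectation instead
```

The input is identical in both cases. Only the relative weighting changes, and that alone is enough to move the result from "silence" to "voice" in this toy setting.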

This mechanism highlights a critical feature of perception: the brain does not inherently distinguish between internally generated and externally derived information. Instead, it relies on contextual and probabilistic cues to infer the source of sensory content. When these inferential processes fail, internally generated signals may be misattributed as external, leading to hallucinations.

Perceptual fabrication is not limited to pathological conditions. Even in everyday life, perception is shaped by expectations, beliefs, and context. For example, ambiguous stimuli can be interpreted in multiple ways depending on prior knowledge. A sound may be perceived as threatening or harmless based on the listener’s expectations. Similarly, visual perception can be influenced by context, leading individuals to see patterns or objects that align with their expectations.

Emotion further modulates perception. Emotional states can bias the interpretation of sensory input, amplifying certain features while attenuating others. Anxiety, for instance, may heighten sensitivity to potential threats, leading individuals to perceive neutral stimuli as dangerous. This bias does not merely affect interpretation after perception; it influences the perceptual process itself.

The integration of emotion and perception suggests that reality, as experienced, is not purely sensory but affectively constructed. The world appears not only as it is but as it matters to the organism. This affective dimension introduces variability into perception, as different individuals may experience the same environment in different ways depending on their emotional states.

Memory also contributes to perceptual construction. The brain uses past experiences to inform current perception, filling in gaps and resolving ambiguities. This reliance on memory allows for efficient processing but also introduces the possibility of distortion. Perception becomes a synthesis of present input and past experience, blurring the boundary between perception and memory.

In some cases, this synthesis can lead to confabulation, in which individuals produce coherent but inaccurate memories or accounts of their experience without being aware of the inaccuracy. Confabulation illustrates how the brain can prioritize coherence over accuracy, constructing plausible interpretations even when information is incomplete or inconsistent.

The concept of reality itself becomes complex in light of these processes. If perception is inherently constructive, then the experienced world is always mediated by neural processes. This does not imply that external reality does not exist, but rather that access to it is indirect. The brain constructs a model of the world that is useful for action and survival, not necessarily one that perfectly mirrors objective conditions.

This functional perspective suggests that perception is optimized for utility rather than accuracy. The goal of perception is to guide behavior effectively, not to provide a veridical representation of the environment. As long as the constructed reality supports adaptive action, minor deviations from objective reality may be inconsequential.

However, when perceptual fabrication becomes too pronounced, it can lead to significant difficulties. In psychiatric conditions such as psychosis, the boundary between internal models and external reality may become severely disrupted. Individuals may experience perceptions or beliefs that are not shared by others, leading to challenges in communication and functioning.

These conditions highlight the importance of calibration between prediction and sensory input. A stable perception of reality depends on the brain’s ability to balance these components, adjusting predictions in response to evidence while maintaining coherent models of the world.

The social dimension of perception also plays a critical role. Humans rely on shared reality to coordinate behavior and communicate effectively. Language, cultural norms, and collective knowledge provide frameworks that align individual perceptions with those of others. This alignment reinforces the sense that perception corresponds to an objective world.

When this alignment breaks down, individuals may feel isolated or disconnected from others. Differences in perception can lead to misunderstandings or conflict, particularly when individuals are unaware that their experiences are constructed differently.

Technological environments introduce new challenges to the stability of perceived reality. Virtual and augmented realities can create immersive experiences that mimic or alter sensory input. These technologies demonstrate how easily perception can be shaped by controlled stimuli, further emphasizing its constructive nature.

At the same time, they raise questions about the criteria by which reality is defined. If experiences generated by artificial systems can feel indistinguishable from those generated by the physical world, the distinction between real and fabricated becomes less clear at the level of subjective experience.

Despite these complexities, the brain maintains mechanisms for grounding perception. Sensory feedback, error correction, and social validation help align internal models with external conditions. These mechanisms allow individuals to function effectively within their environments, even if perception is not perfectly accurate.

Ultimately, the question of whether perception fabricates reality does not yield a simple yes or no answer. Perception does not create reality ex nihilo, but it actively constructs the form in which reality is experienced. The world as perceived is a synthesis of external input and internal inference, shaped by prediction, emotion, memory, and context.

This understanding reframes the nature of experience. Rather than passively receiving the world, individuals participate in its construction at every moment. The reality they inhabit is not a direct imprint of the external environment but a dynamic model generated by the brain.

In this sense, perception does not merely reveal reality; it continually fabricates the version of reality that the mind inhabits.